The solutions that are mentioned in this article work for any Linux distribution: Ubuntu, Red Hat, Open SUSE, CentOS, Debian, Oracle Linux, etc. However, the package installation commands may differ slightly.

The question of regular backups eventually comes up for any database management system. PostgreSQL is no exception. As a rule, the pg_dump utility, which is part of the PostgreSQL client, is used to create a hot backup.

In all the examples below, the postgres user will be utilized for working with pg_dump. You can specify a different user by using pg_dump -U <username>, and the password can be transferred with the environment variable PGPASSWORD=<password>. If you plan to connect to a non-local server, use the -h parameter to specify the host name.

Minimal solution

To perform a backup with this utility, just call it and specify the destination file:

su - postgres pg_dump -U postgres db_name | gzip > backup_file.gz

You can add a cron job to run backups regularly. To this end, follow the steps below:

-

- Create a backup storage folder

sudo mkdir -p /backups/postgresql

- Provide the postgres user permissions to the directory

sudo chown postgres /backups/postgresql

- Set the PostgreSQL user

su - postgres

- Open crontab by running the command

crontab -e

- Add this line at the end, replacing db_name with the name of your database

0 0 * * 0 pg_dump -U postgres db_name | gzip > /backups/postgresql/db_name.gz

- Create a backup storage folder

This way, a backup will be performed on your server at midnight on Saturday. This is the most basic solution to a backup task.

Run the following commands to restore the database.

-

- Set the PostgreSQL user

su - postgresl

- Recreate the database if it exists

psql -c "drop database t1" && psql -c "create database t1;"

- Restore the database from the archive

gunzip -c /backups/postgresql/db_name.gz | psql db_name

- Set the PostgreSQL user

Extended solution

A good solution should at least encompass the following aspects:

- Creating a backup with a unique name

- File compression

- Encrypting the compressed file

- Receiving email notifications concerning backup results

- Deleting old backups

The official PostgreSQL wiki offers a good script for solving these tasks. However, the disadvantage of this script is the lack of email notifications.

To create backups with email notifications, you can use the script provided below. But before that, you need to install postfix mailutils. These are the steps to do this in Ubuntu:

sudo apt-get update sudo apt-get install postfix mailutils

Also, please note that this script must be run by the postgres user. To log in under this user, run the command:

su - postgres

The most basic method for running the bash script for regular backups is shown below. In the beginning of the script, specify the backup storage directory, email notification address, and backup storage period.

# Database name db_name=pagila # Backup storage directory backupfolder=~/postgresql/backups # Notification email address recipient_email=<username@mail.com> # Number of days to store the backup keep_day=30 sqlfile=$backupfolder/pagila-$(date +%d-%m-%Y_%H-%M-%S).sql zipfile=$backupfolder/pagila-$(date +%d-%m-%Y_%H-%M-%S).zip #create backup folder mkdir -p $backupfolder # Create a backup if pg_dump $db_name > $sqlfile ; then echo 'Sql dump created' else echo 'pg_dump return non-zero code' | mailx -s 'No backup was created!' $recipient_email exit fi # Compress backup if gzip -c $sqlfile > $zipfile; then echo 'The backup was successfully compressed' else echo 'Error compressing backup' | mailx -s 'Backup was not created!' $recipient_email exit fi rm $sqlfile echo $zipfile | mailx -s 'Backup was successfully created' $recipient_email # Delete old backups find $backupfolder -mtime +$keep_day -delete

You can schedule this script to run regularly as shown here, but instead of calling pg_dump, specify the path to the script.

SqlBak

Creating a script is not the only way to automate backups. You can use a cloud backup service such as SqlBak.

SqlBak provides a wide range of features for automated PostgreSQL database backup. The process of creating a backup in SqlBak can include the following steps: creating, compressing, encrypting, sending to cloud storage, sending notifications and restoring on another server.

This service allows you to create backups of not only PostgreSQL, but also MySQL and MS SQL Server databases.

Installation and Registration

The application is installed by standard packet managers of the operating system. Instructions for installing and registering the packet for your OS can be found here.

The installation of the SqlBak app on Linux is very simple, more details on how to do it can be found here.

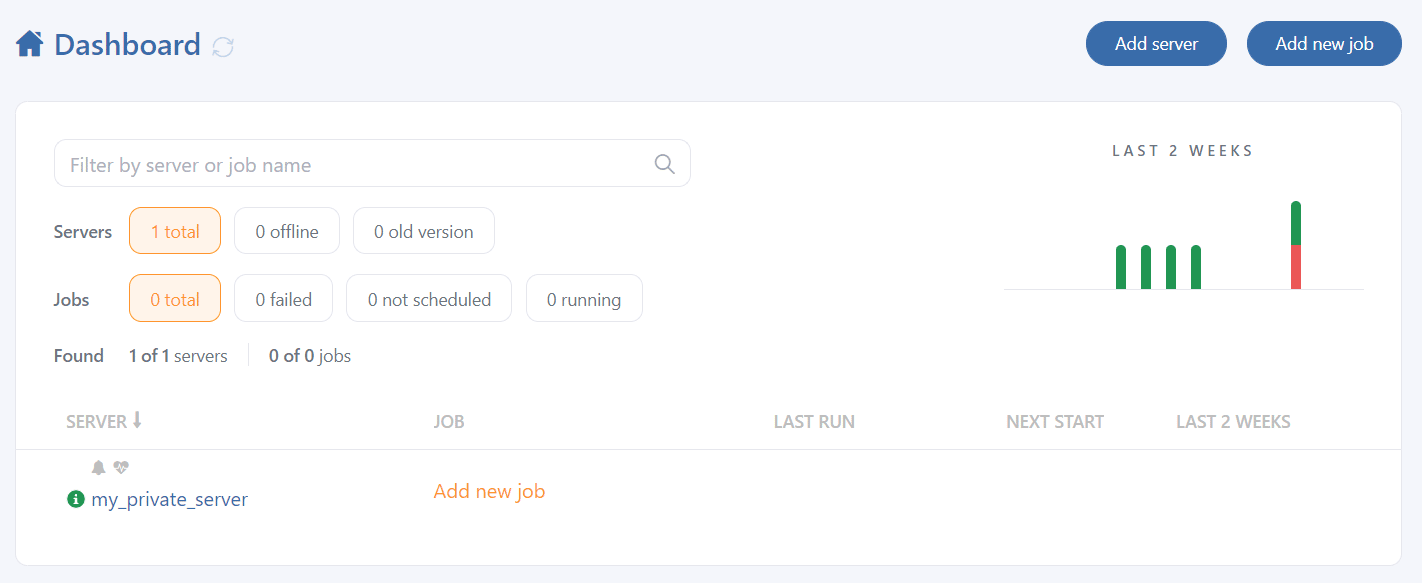

After registration, your server will appear on the dashboard page.

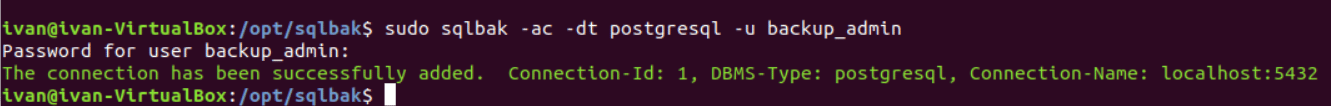

You will then need to add a connection to the PostgreSQL database:

sudo sqlbak --add-connection --db-type=postgresql --user=postgres

This completes the SqlBak setup on the console.

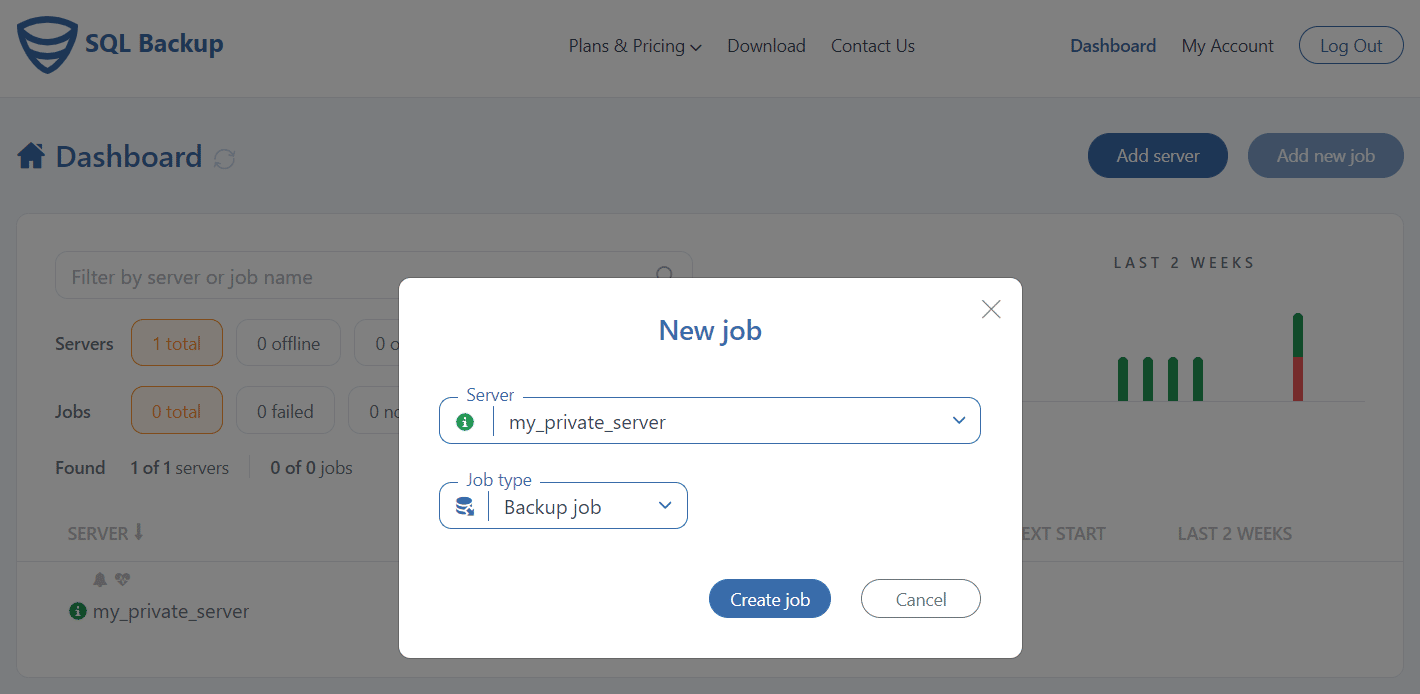

Now you can create a backup job on the dashboard page. Here you need to click the “Add New Job” button, and then “Create Job.”

After that, you will see the interface for creating backup jobs. The first two steps will be filled in by default.

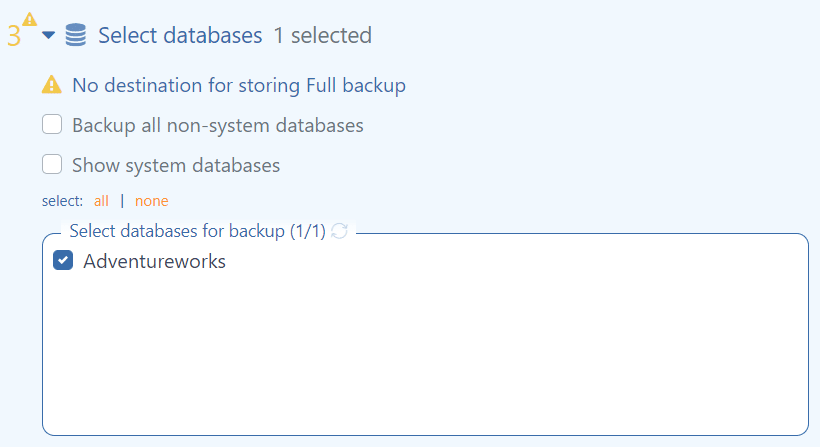

The first thing you must do here is specify in the “Select Database” section the databases you need to save. You can also select “backup all non-system databases” to back up all non-system databases.

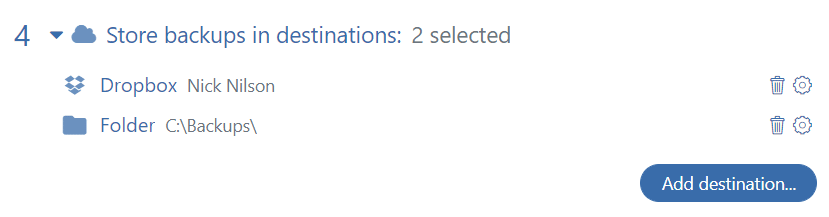

Then you need to specify the location where you want the backup to be saved. This can be specified in the “Store Backups in the Destination” section. You can choose Folder, FTP, Amazon S3, GoogleDrive, DropBox, Azure Blob Storage, or OneDrive. Please note: you can select multiple save locations at once, such as local FTP and DropBox.

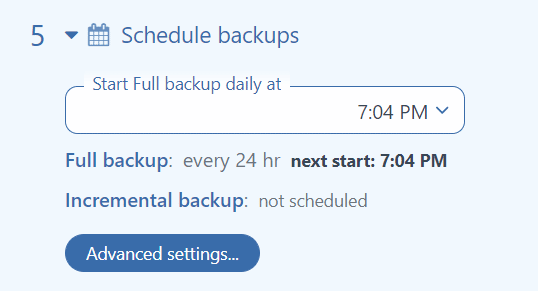

In the “Schedule Backups” section, you can specify the time when the backup must be run daily. If the 24-hour backup interval does not work for you, you can specify an advance run settings in the “Advanced Settings”, like in cron.

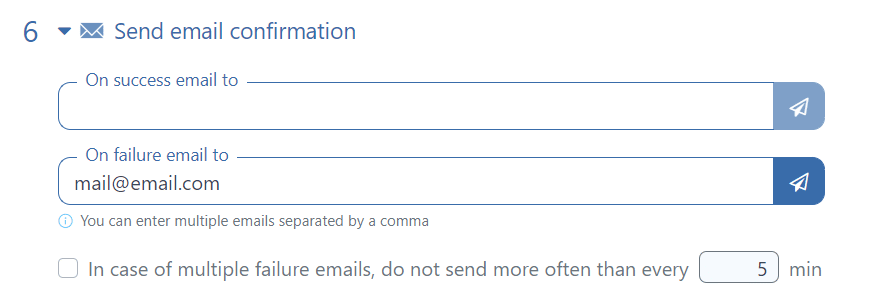

The “Send Email Confirmation” section allows you to configure email notifications. You can specify multiple addresses separated by commas. You can check immediately if the notification was sent by clicking the airplane button.

On the backup settings page, you can find many more interesting and useful settings, such as: “Execute After & Before Scripts,” “Encryption,” and “Backup Folder.”

SqlBak allows you to perform restore tasks on another server immediately after backup. This can be specified in the “Restore Tasks” section. This can be useful if you need to have an up-to-date database for testing.

To save your settings and start working on a schedule, click “Save & Exit.”

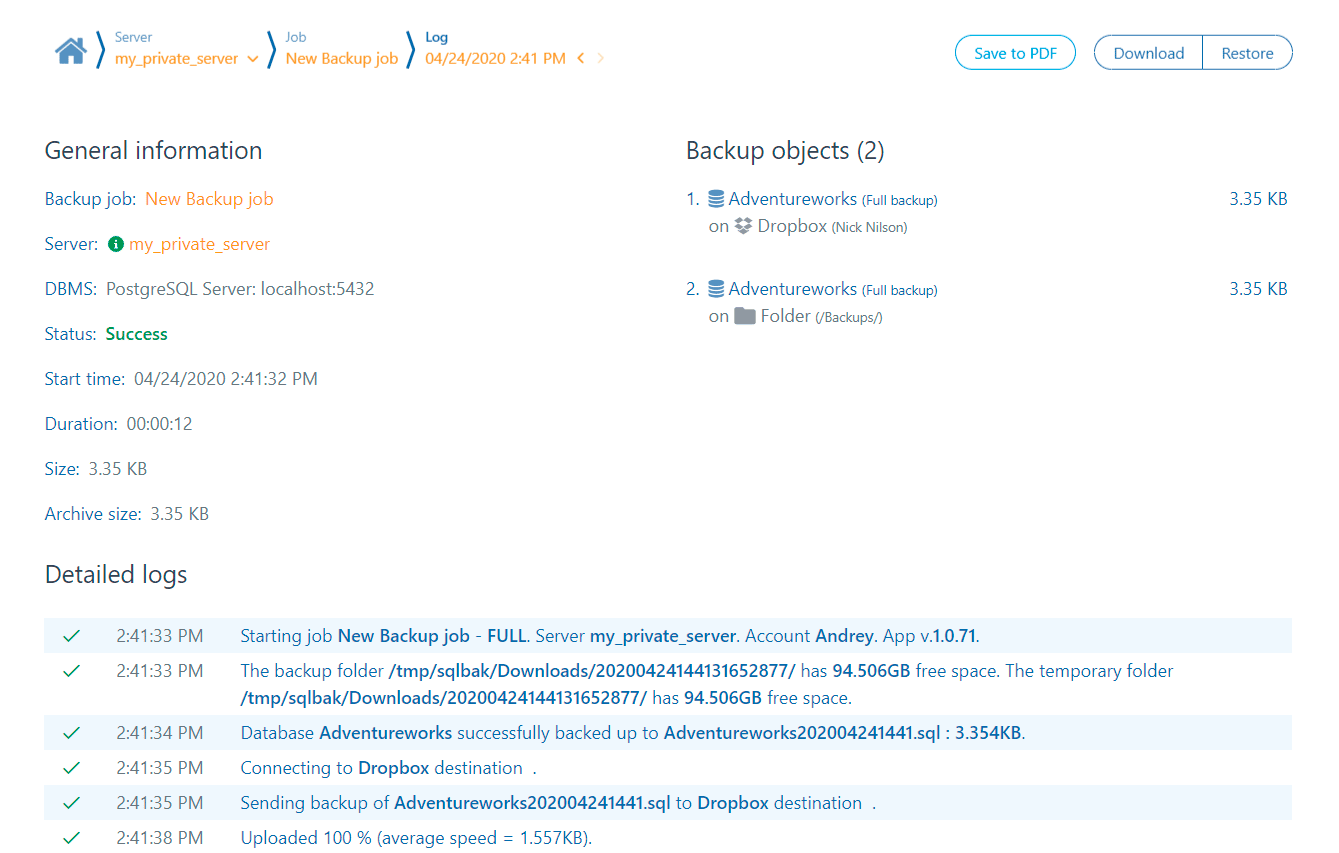

Clicking on the “Run Now” button will immediately start the job and show you the job progress log.

in the script

the line if

gzip -c $zipfile > $sqlfile

should be

if gzip -c $sqlfile > $zipfile;

Hi Samuel,

Oh, really, sorry. We have fixed it, thank you very much!

Very good blog,thank so much for your time in writing the posts.

Really good site,thank so much for your time in writing the posts.

Nice post, thank you.

How to get a last 2 days backup …?

Hi Sahana,

You can set the auto-delete option to remove your old backups at your backup destination settings at the “Auto delete old backups after” section.

Can I change pg_dump to use a custom format instead of plain ?

Hi Vinícius Pádua,

Could you please specify the following parameter:

–format=custom

when calling pg_dump in a script

In SqlBak, for the time being, there is no such option.

Hello,

Thank you for such a great tool. I haven’t finished my setup yet but I believe it will be very handy for my use case.

I intend to backup my digitalocean postgres database. I run the command

sudo sqlbak -ac -dt postgresql -h -u doadmin -p -P

But I get the error below

Handling a console command has failed. Failed to add DBMS connection. “psql: error: FATAL: database “postgres” does not exist

On digitalocean, there is no database named postgres, and there is nothing in the sqlbak reference that talks about specifying a database.

What do I do?

Hello,

Could you please try to use –database=mydb or -d mydb ?

Thank you and sorry for the inconvenience.

How can one back up all posgres dbs to a single file based on the above script?

Hi Henry Mutegeki,

Yes, you can do it, please change “pg_dump $db_name” to “pg_dumpall”.

If I just want to the file format to be zip file and have no conversion or compression done. can I do it and How ?

Hi Farhan,

You can find all the compression settings at the “Compress backups” during your backup job settings. Also, you can try to set the “Compression level” to “None”.

I’m using this

0 0 * * 0 pg_dump -U postgres db_name | gzip > backup_file.gz

this way my backup is empty. What could be wrong?

Hi Arthur,

This is probably due to the fact that you specified a non-existent database name “db_name”

In this blog post, we assume that db_name will be replaced with the name of a specific database on your server.

If you want to backup all databases on the server please change “pg_dump $ db_name” to “pg_dumpall”.

Hi. I am new to this thing. Can you please explain the following line?

password can be transferred with the environment variable PGPASSWORD=

Hi Haris,

This means that the password can be passed to the pg_dump utility via an environment variable called PGPASSWORD

Any way, how to restore the file backup like “all-database-08-03-2024_09-20-18.sql”

Is this command possible?

“pg_restore -U postgres -d backup_db < all-database-08-03-2024_09-20-18.sql"

Hello Fazlur,

No, when using the pg_restore utility, the database parameter should not be passed through stdin redirection but rather as the last parameter, like so:

pg_restore -U postgres -d backup_db all-database-08-03-2024_09-20-18.sqlAdditionally, you can restore a database using the psql utility:

psql dbname